AI Agents

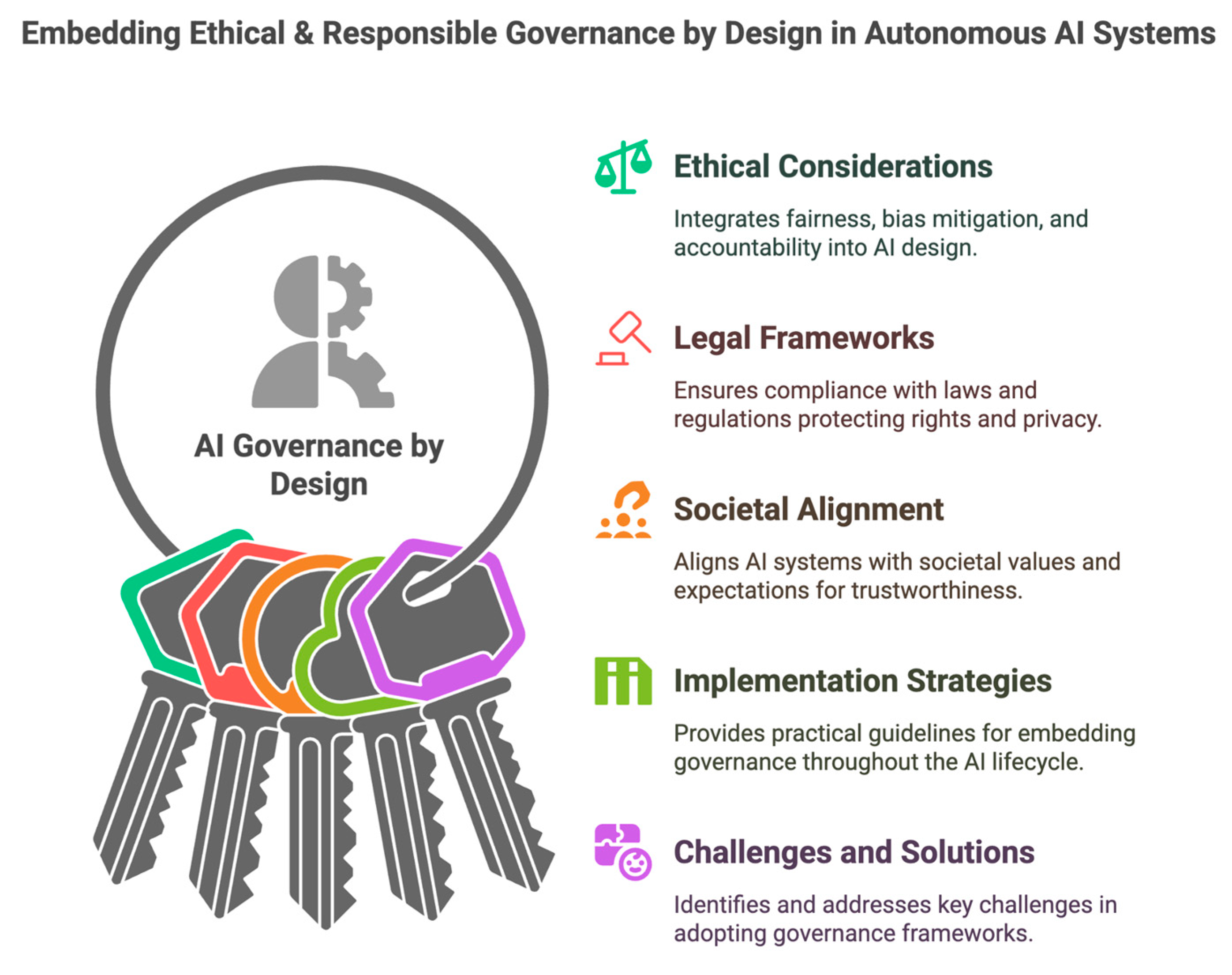

Benefits and risks for the public and private sectors

AI agents are not just better chatbots. They are systems that combine language models with tools, memory, retrieval, and the ability to execute multi-step actions across software environments. That combination gives them real productive potential for both the Greek public sector and private firms, but …