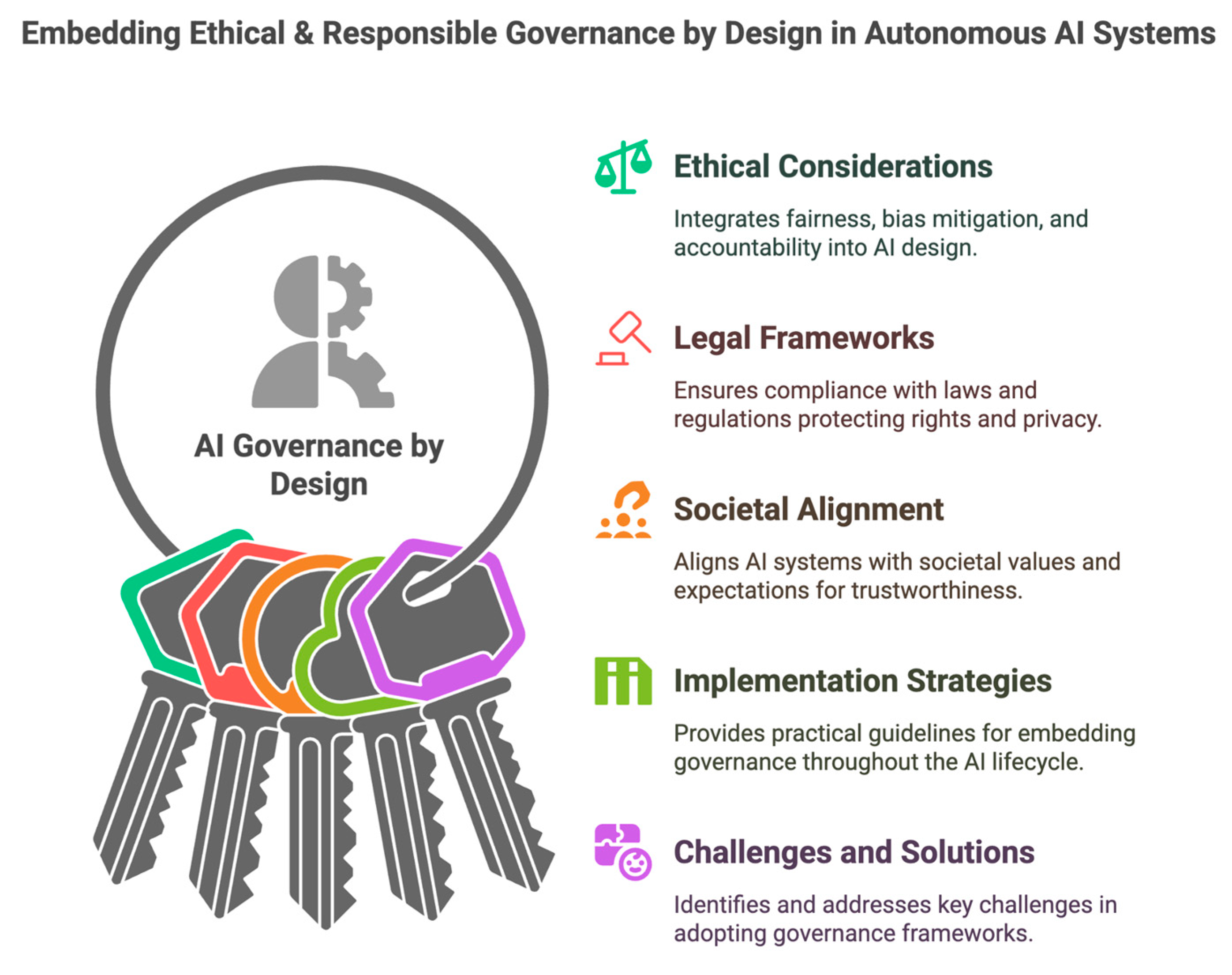

Large AI models should not be used in administration without guardrails, verification, and experienced human review

Fluency is not reliability

Large language models create a dangerous illusion for both public administration and private organizations. They look like universal productivity engines: fast drafting, fast summaries, fast answers, fast recommendations. But speed and fluency are not the same as accuracy, accountability, or institutional reliability. A model can produce a polished paragraph and still …