Convenience is not the same as learning

Large language models are now part of everyday life in education, work, and public communication. They draft text, summarize documents, suggest ideas, organize arguments, and respond instantly to complex questions. Their usefulness is obvious. But that usefulness becomes a problem when speed replaces effort and assistance turns into substitution. The issue is no longer whether we should use these systems. The real issue is how to use them without allowing them to erode judgment, memory, and intellectual independence.

What makes these systems attractive is precisely what makes them risky. They remove friction. They reduce the time needed to produce something that looks finished. Yet thinking is not just the act of producing an output. Thinking involves uncertainty, trial and error, recall, comparison, hesitation, revision, and the slow internal work through which understanding is formed. When that work is outsourced too early, the result may still look polished, but the user has often learned less than the text suggests.

The danger is not only error, but also passivity

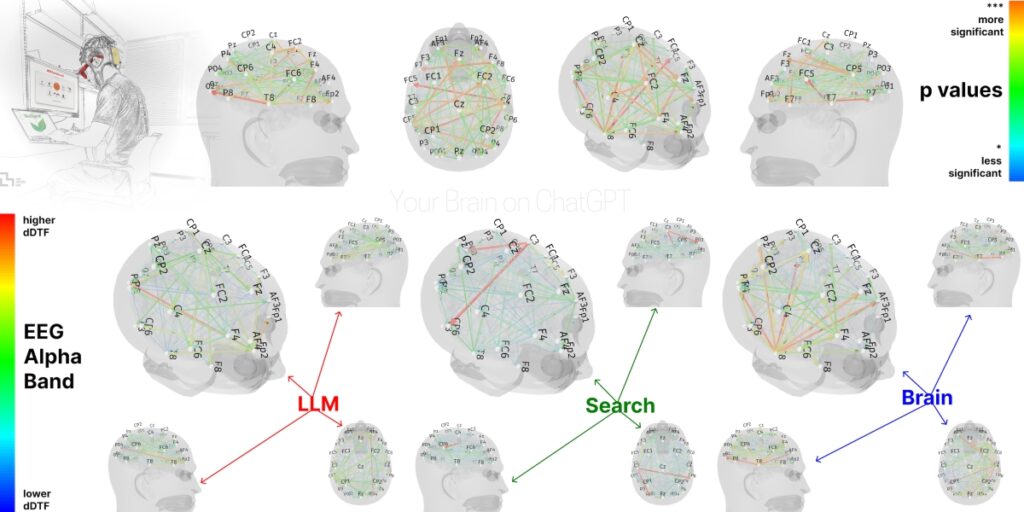

Much of the public debate focuses on hallucinations, bias, and unreliable citations. These are serious concerns, but they are not the whole story. A deeper problem appears when users begin to rely on the model not as a tool for support, but as a replacement for their own cognitive effort. In that case, the model does not simply help them write. It begins to structure their reasoning, select their vocabulary, narrow their range of expression, and reduce the need to remember or synthesize.

That is where the idea of cognitive debt becomes useful. As with a loan, the user saves effort in the short term, but the cost is deferred. It comes due later, when they must explain an argument without assistance, defend a text they barely processed, or make a decision in a context where no instant machine response is available. What was gained in speed may be lost in understanding. What was gained in fluency may be lost in ownership.

Productive use begins after human effort, not before

Large language models can be highly useful when they are used after a first layer of human work has already taken place. They can help organize notes, suggest alternative phrasing, identify weaknesses in a draft, propose questions for further inquiry, or compare different versions of an argument. In these cases, the system supports reflection rather than replacing it. It functions as a second reader, an editorial assistant, or a structured prompt for revision.

The harmful pattern begins when users ask the model to generate the core intellectual content for them. The model should not be writing the central argument, making the key judgment, or producing both the first and the final version of a piece that the user is expected to understand and own. In education especially, the first draft often needs to be human. The student should struggle with the idea before asking the machine to optimize the wording. Otherwise, the visible output improves while the invisible learning process weakens.

Rules for responsible use

Responsible use of large language models requires a simple discipline. Use them to clarify, not to replace. Use them to challenge your reasoning, not to spare you from reasoning. Verify facts, references, and quotations independently. Disclose their use when transparency matters, especially in education, research, public administration, and journalism. Never casually upload sensitive personal, institutional, or confidential material to external systems. And most importantly, keep human responsibility at the center of every consequential task.

These systems are best understood as tools for augmentation, not delegation. The human user must retain the question, the standard of judgment, and the final accountability. Once that balance is lost, convenience becomes dependency. If that balance is maintained, large language models can still be valuable instruments for productivity and learning. The goal is not to reject them. The goal is to make sure that human intelligence remains primary, active, and in command.

Source of this article: https://glossapi.gr/