From better retrieval to answer inspection before delivery

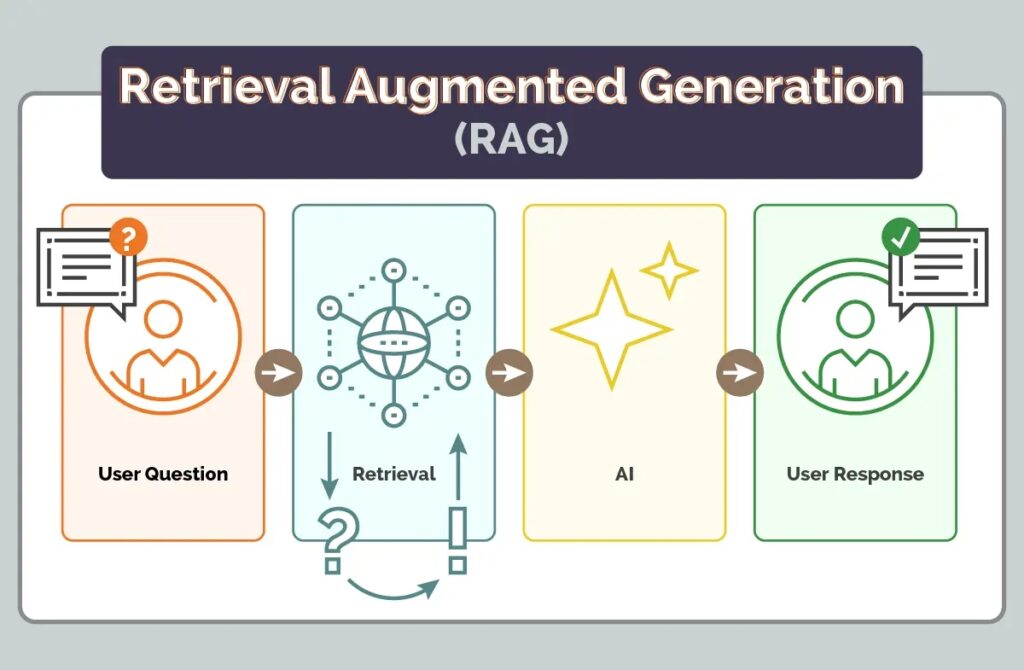

Retrieval-Augmented Generation (RAG) was introduced as a practical answer to one of the central weaknesses of large language models: their tendency to produce fluent, confident text even when they do not know the answer. The core idea is straightforward. Before the model answers, the system retrieves relevant documents. The model is then expected to answer from those documents rather than from its internal training memory alone. In theory, this should reduce hallucinations. In practice, it reduces one class of risk but creates another. A RAG system can retrieve the right document and still generate the wrong answer.

This is the failure that matters in production. The problem is not only that the answer is false. The problem is that it appears grounded. Users have good reason to trust a RAG answer because the system was designed to read the evidence first. When the final response contradicts that evidence, the failure is more dangerous than a normal chatbot hallucination. It carries the appearance of verification without the reality of verification.

That means hallucination control cannot stop at retrieval quality. Better chunking, hybrid search and reranking all matter, but they do not solve the last-mile problem: what did the model actually say? A robust RAG system needs an inspection layer after generation and before delivery. The answer should be checked, scored, repaired where possible and blocked where necessary.

The five failure patterns to watch

The first pattern is overconfident ungrounded language. The model uses phrases such as “clearly stated”, “definitely” or “guaranteed” while making claims that cannot be found in the retrieved context. This is especially risky because the language removes the normal signal of uncertainty.

The second pattern is factual contradiction. The source says the return window is 14 days, but the answer says 30 days. The source says the Pro plan costs 120 dollars per year, billed annually, but the answer says 10 dollars per month. The monthly figure may be arithmetically equivalent, but it misstates the billing terms. In both cases the retrieved evidence was present. The model simply failed to follow it.
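The first pattern can be screened cheaply before any deeper check runs. The sketch below is a minimal illustration, not a complete detector: the marker list and the function names are assumptions made for this example, and a flagged sentence is only a candidate that still has to be checked against the retrieved context.

```python
import re

# Hypothetical marker list; a real deployment would tune it per domain.
CERTAINTY_MARKERS = ("clearly stated", "definitely", "guaranteed")


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def flag_overconfident(answer: str) -> list[str]:
    """Return the answer sentences that contain a certainty marker."""
    flagged = []
    for sentence in split_sentences(answer):
        lowered = sentence.lower()
        if any(marker in lowered for marker in CERTAINTY_MARKERS):
            flagged.append(sentence)
    return flagged
```

Flagged sentences are good candidates for stricter grounding checks, since confident wording on an unsupported claim is what makes this pattern dangerous.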

The third pattern is hallucinated entities. These include invented researchers, institutions, citations, case names or product names. A model may cite “Dr. James Harrison” or an arXiv paper that does not appear anywhere in the retrieved documents.

The fourth pattern is negation reversal. The context says a service “does not support cancellation after payment”, while the answer says that it “supports cancellation after payment”.

The fifth pattern is answer drift. The same question receives a stable answer for weeks and then silently changes after an index rebuild, a prompt change or a document update. For example, a product price may drift from 49.99 to 39.99 without any system alert.
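Negation reversal and answer drift both lend themselves to simple heuristic checks. The following is a rough sketch under stated assumptions: the word lists, the overlap threshold and all function names are hypothetical, the stemming is deliberately crude, and double negations are not handled.

```python
import re

NEGATORS = {"not", "no", "never", "cannot"}
AUXILIARIES = {"do", "does", "did"}  # dropped so "does not support" lines up with "supports"


def _polarity_and_core(sentence: str) -> tuple[bool, frozenset]:
    """Return (is_negated, content tokens with negators and auxiliaries removed)."""
    tokens = re.findall(r"[a-z']+", sentence.lower())
    negated = any(t in NEGATORS or t.endswith("n't") for t in tokens)
    core = frozenset(
        t.rstrip("s")  # crude normalization: "supports" -> "support"
        for t in tokens
        if t not in NEGATORS and t not in AUXILIARIES and not t.endswith("n't")
    )
    return negated, core


def is_negation_reversal(context_sentence: str, answer_sentence: str,
                         min_overlap: float = 0.8) -> bool:
    """True when both sentences share most content words but differ in polarity."""
    ctx_neg, ctx_core = _polarity_and_core(context_sentence)
    ans_neg, ans_core = _polarity_and_core(answer_sentence)
    if not ctx_core or not ans_core:
        return False
    overlap = len(ctx_core & ans_core) / len(ctx_core | ans_core)  # Jaccard similarity
    return ctx_neg != ans_neg and overlap >= min_overlap


def numeric_fingerprint(answer: str) -> tuple[str, ...]:
    """Sorted tuple of every number that appears in the answer."""
    return tuple(sorted(re.findall(r"\d+(?:\.\d+)?", answer)))


def has_drifted(previous_answer: str, current_answer: str) -> bool:
    """True when the numbers in an answer changed between two runs."""
    return numeric_fingerprint(previous_answer) != numeric_fingerprint(current_answer)
```

In practice the drift check would run against a stored snapshot of answers to a fixed set of probe questions, re-evaluated after every index rebuild, prompt change or document update.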

Inspecting faithfulness, contradiction and entities

A practical hallucination control layer should begin with faithfulness scoring. The answer can be split into factual claim sentences, and each claim can be checked against the retrieved context. The goal is not to demand exact quotation. Natural paraphrase should be allowed. But the central content of each claim must be traceable to the source. If most of an answer cannot be linked to the retrieved evidence, it should not be delivered as a grounded answer.
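To make the scoring idea concrete, here is a minimal sketch that uses token overlap as a stand-in for a real support check. That substitution is an assumption of this example: overlap tolerates only light paraphrase, and a production system would more likely use an entailment model or embedding similarity. The stopword list, the threshold and the function names are illustrative.

```python
import re

# Small illustrative stopword list; not exhaustive.
STOPWORDS = {"a", "an", "the", "is", "are", "of", "to", "in", "for", "and", "be", "can"}


def _content_tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens with stopwords removed."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def faithfulness_score(answer: str, context: str, claim_threshold: float = 0.6) -> float:
    """Fraction of answer sentences whose content tokens mostly occur in the context."""
    claims = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not claims:
        return 0.0
    context_tokens = _content_tokens(context)
    supported = 0
    for claim in claims:
        tokens = _content_tokens(claim)
        if tokens and len(tokens & context_tokens) / len(tokens) >= claim_threshold:
            supported += 1
    return supported / len(claims)


context = "Items can be returned within 14 days of delivery."
answer = "Items can be returned within 14 days. Shipping is free worldwide."
score = faithfulness_score(answer, context)  # one of two claims is supported
```

A low score does not say which repair to apply. It only says that the answer should not be delivered as grounded without further handling.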

The second check is contradiction detection. Numbers, dates, percentages, prices, limits and billing periods are common sources of production failures. If the context says “14 days” and the answer says “30 days”, this is not a stylistic variation. It is a contradiction. The same applies to temporal expressions such as monthly versus annual billing. A useful detector should also handle labels carefully. A product code such as SKU-441 contains a number, but it is not a price or a policy value. Without this distinction, the detector will create false alarms.
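One way to approach this check is to compare only numbers that carry a unit, which also keeps label codes such as SKU-441 out of the comparison. The sketch below is illustrative: the unit list, the regular expression and the function names are assumptions, and it only compares values whose unit appears in both texts.

```python
import re

# A number counts as a policy value only when a known unit follows it.
# The lookbehind skips digits glued to a label, as in "SKU-441".
VALUE = re.compile(
    r"(?<![\w-])(\d+(?:\.\d+)?)\s+(days?|months?|years?|dollars?|percent)\b",
    re.IGNORECASE,
)


def extract_values(text: str) -> dict[str, set[float]]:
    """Map each unit (singular form) to the set of numbers stated with it."""
    values: dict[str, set[float]] = {}
    for number, unit in VALUE.findall(text):
        values.setdefault(unit.lower().rstrip("s"), set()).add(float(number))
    return values


def find_contradictions(answer: str, context: str) -> list[tuple[str, float, list[float]]]:
    """Return (unit, answer_value, context_values) for values the context does not state."""
    context_values = extract_values(context)
    issues = []
    for unit, numbers in extract_values(answer).items():
        if unit not in context_values:
            continue  # unsupported rather than contradicted; left to the faithfulness check
        for number in sorted(numbers - context_values[unit]):
            issues.append((unit, number, sorted(context_values[unit])))
    return issues


issues = find_contradictions(
    "Order SKU-441 can be returned within 30 days.",
    "Order SKU-441 can be returned within 14 days.",
)
```

Matching on the unit alone is coarse. A context that states several values with the same unit, for example two different day limits, would need the comparison to be scoped to the relevant sentence.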

The third check is entity verification. Named people, organizations, papers and citations in the generated answer should be present in at least one retrieved document. This is where named entity recognition is useful. Regular expressions can catch some patterns, but they often confuse capitalized technical phrases with names. A statistical NER model can reduce false positives, especially when checking people, institutions and citations.
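The verification step itself is small once the entities have been extracted. The sketch below assumes that an upstream NER model has already produced the list of entity strings from the generated answer. The function name is hypothetical, and the match is a plain case-insensitive substring test, so aliases and abbreviations would need extra handling.

```python
def unverified_entities(answer_entities: list[str], documents: list[str]) -> list[str]:
    """Return the entities that appear in none of the retrieved documents."""
    # Normalize case and whitespace so line breaks in a document do not hide a match.
    normalized_docs = [" ".join(doc.lower().split()) for doc in documents]
    missing = []
    for entity in answer_entities:
        needle = " ".join(entity.lower().split())
        if not any(needle in doc for doc in normalized_docs):
            missing.append(entity)
    return missing


missing = unverified_entities(
    ["Dr. James Harrison", "Acme Labs"],  # assumed output of an NER step on the answer
    ["Acme Labs published the updated return policy."],
)
```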

Repairing the answer before the user sees it

Detection is necessary, but it is not enough. A production system must decide what to do next. There are three useful repair strategies. The first is contradiction patching. If the answer says “10 dollars per month” but the context says “120 dollars per year, billed annually”, the system should not only replace the number. It should also normalize the billing language across the sentence. The corrected answer should say that the plan costs 120 dollars per year and is billed annually. Otherwise, the correction may leave behind misleading wording.
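As an illustration of contradiction patching, the sketch below replaces the whole price phrase, including the billing wording, with the phrase found in the context. It assumes that the context states exactly one price and that prices follow the pattern in the regular expression. Both are simplifications for this example, and the names are hypothetical.

```python
import re

# Matches e.g. "10 dollars per month" or "120 dollars per year, billed annually".
PRICE_PHRASE = re.compile(
    r"\d+(?:\.\d+)?\s+dollars\s+per\s+(?:month|year)"
    r"(?:,\s+billed\s+(?:monthly|annually))?",
    re.IGNORECASE,
)


def patch_price(answer: str, context: str) -> tuple[str, bool]:
    """Replace every price phrase in the answer with the one stated in the context.

    Returns the (possibly patched) answer and a flag telling whether it changed.
    """
    source = PRICE_PHRASE.search(context)
    if source is None:
        return answer, False  # nothing authoritative to patch from
    canonical = source.group(0)
    # A function replacement avoids any backslash interpretation in the source text.
    patched = PRICE_PHRASE.sub(lambda _match: canonical, answer)
    return patched, patched != answer


patched, changed = patch_price(
    "The Pro plan costs 10 dollars per month.",
    "The Pro plan costs 120 dollars per year, billed annually.",
)
```

Replacing the full phrase rather than the bare number is what keeps the corrected sentence from saying "120 dollars per month".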

The second strategy is entity scrubbing. If the answer contains names or references that cannot be verified in the retrieved documents, the system can remove the affected sentences and add a brief transparency note. If all sentences depend on unsupported entities, the safer choice is not to return a shortened answer but to decline and ask for more reliable source material.
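Entity scrubbing can be sketched in a few lines. The note and refusal texts below are placeholders, the function name is an assumption, and the naive sentence splitter would mis-split abbreviations such as "Dr.", so a real system would use a proper sentence segmenter.

```python
import re

# Placeholder wording; a real system would localize and review these messages.
NOTE = "Note: some statements were removed because their sources could not be verified."
DECLINE = "I cannot answer this reliably from the available sources. Please provide more source material."


def scrub_entities(answer: str, unverified: list[str]) -> str:
    """Drop sentences that mention an unverified entity; decline if none remain."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    kept = [
        s for s in sentences
        if not any(entity.lower() in s.lower() for entity in unverified)
    ]
    if not kept:
        return DECLINE  # every sentence depended on an unsupported entity
    if len(kept) == len(sentences):
        return answer  # nothing was removed, so no note is needed
    return " ".join(kept) + " " + NOTE


scrubbed = scrub_entities(
    "Returns are accepted within 14 days. James Harrison confirmed this in 2021.",
    ["James Harrison"],
)
```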

The third strategy is grounding rewrite. When faithfulness is very low, the best response is to rebuild the answer from the most relevant source sentences. This is not a second attempt to be creative. It is a controlled reconstruction from evidence. In high-stakes settings such as legal, medical, financial or administrative systems, the thresholds should be stricter. A safe refusal is better than a fluent but unsupported answer.
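A grounding rewrite can be approximated extractively: pick the source sentences most relevant to the question and return them in their original order. The sketch below uses word overlap with the question as the relevance measure, which is an assumption of this example. A production system might use the retriever's own scores instead. The function names are hypothetical.

```python
import re
from typing import Optional


def _tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounding_rewrite(question: str, context: str, max_sentences: int = 2) -> Optional[str]:
    """Rebuild an answer from the source sentences that best match the question.

    Returns None when no source sentence shares a word with the question,
    which the caller should treat as a refusal.
    """
    question_tokens = _tokens(question)
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", context.strip()) if s]
    scored = [
        (len(question_tokens & _tokens(sentence)), index, sentence)
        for index, sentence in enumerate(sentences)
    ]
    relevant = [item for item in scored if item[0] > 0]
    # Highest overlap first; ties are broken by position in the source.
    top = sorted(relevant, key=lambda item: (-item[0], item[1]))[:max_sentences]
    if not top:
        return None
    # Restore source order so the rebuilt answer reads naturally.
    return " ".join(sentence for _, _, sentence in sorted(top, key=lambda item: item[1]))


rebuilt = grounding_rewrite(
    "What is the return window?",
    "Our store opened in 2010. Items can be returned within the 14 day return window. "
    "Shipping takes three days.",
)
```

Because the output consists only of source sentences, it cannot introduce new claims, which is the point of a controlled reconstruction.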

Why open, testable systems matter

The most important production rule is simple: log every accepted, repaired and rejected answer separately. A repaired answer is not a normal success. It is a signal that the model made a specific type of mistake, and a recurring repair type points to a systematic weakness. If many answers require contradiction patches, the prompt, retriever or source structure may need redesign. If drift appears after an index update, the retrieval pipeline may be degrading. If unsupported citations appear often, the model may be overusing patterns learned during training.
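A minimal sketch of such a log follows. The class, its method names and the reason labels are assumptions made for illustration. The essential property is that each outcome is counted separately, together with the reason, so repair rates can be monitored over time.

```python
from collections import Counter
from dataclasses import dataclass, field

OUTCOMES = {"accepted", "repaired", "rejected"}


@dataclass
class OutcomeLog:
    """Counts answers by (outcome, reason) so repairs are never folded into successes."""
    counts: Counter = field(default_factory=Counter)

    def record(self, outcome: str, reason: str = "") -> None:
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome!r}")
        self.counts[(outcome, reason)] += 1

    def rate(self, outcome: str) -> float:
        """Share of all logged answers that ended with the given outcome."""
        total = sum(self.counts.values())
        matching = sum(n for (o, _), n in self.counts.items() if o == outcome)
        return matching / total if total else 0.0


log = OutcomeLog()
log.record("accepted")
log.record("repaired", "contradiction_patch")
log.record("repaired", "contradiction_patch")
log.record("rejected", "low_faithfulness")
```

A rising `rate("repaired")` for one reason label is the kind of signal that should trigger a review of the prompt, the retriever or the source structure.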

A useful pipeline follows a clear sequence: retrieve, generate, inspect, score, repair, deliver. This sequence does not eliminate hallucinations at the model level. It limits how many of them reach the user. The goal is not to pretend that RAG systems are perfectly reliable. The goal is to make their reliability observable, testable and auditable.
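The sequence can be sketched as a small orchestration function. Everything here is an assumption for illustration: the four callables stand in for real retrieval, generation, scoring and repair components, and the two thresholds are placeholder values that a high-stakes deployment would set more strictly.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    status: str            # "accepted", "repaired" or "rejected"
    answer: Optional[str]  # None when the answer is blocked
    score: float


def run_pipeline(
    question: str,
    retrieve: Callable[[str], list[str]],
    generate: Callable[[str, list[str]], str],
    score: Callable[[str, list[str]], float],
    repair: Callable[[str, list[str]], str],
    accept_at: float = 0.8,  # placeholder threshold
    repair_at: float = 0.4,  # below this, repair is not attempted
) -> Verdict:
    """Retrieve, generate, inspect and score, repair if worthwhile, then deliver or block."""
    documents = retrieve(question)
    draft = generate(question, documents)
    draft_score = score(draft, documents)
    if draft_score >= accept_at:
        return Verdict("accepted", draft, draft_score)
    if draft_score >= repair_at:
        fixed = repair(draft, documents)
        fixed_score = score(fixed, documents)  # a repaired answer is re-inspected
        if fixed_score >= accept_at:
            return Verdict("repaired", fixed, fixed_score)
    return Verdict("rejected", None, draft_score)


# Stub components, for demonstration only.
verdict = run_pipeline(
    "What is the return window?",
    retrieve=lambda q: ["Returns are accepted within 14 days."],
    generate=lambda q, docs: "Returns are accepted within 30 days.",
    score=lambda answer, docs: 1.0 if answer in docs else 0.5,
    repair=lambda answer, docs: docs[0],
)
```

Re-scoring the repaired answer before delivery matters: a repair that does not clear the acceptance threshold is treated as a rejection rather than passed through.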

For public-interest applications, this is also a governance question. We need open datasets, documented corpora, reproducible tests, open-source components and human oversight. A large model is not automatically trustworthy. A system becomes trustworthy when its answers can be traced to sources, its failures can be measured, its corrections can be audited and its limits can be stated honestly.